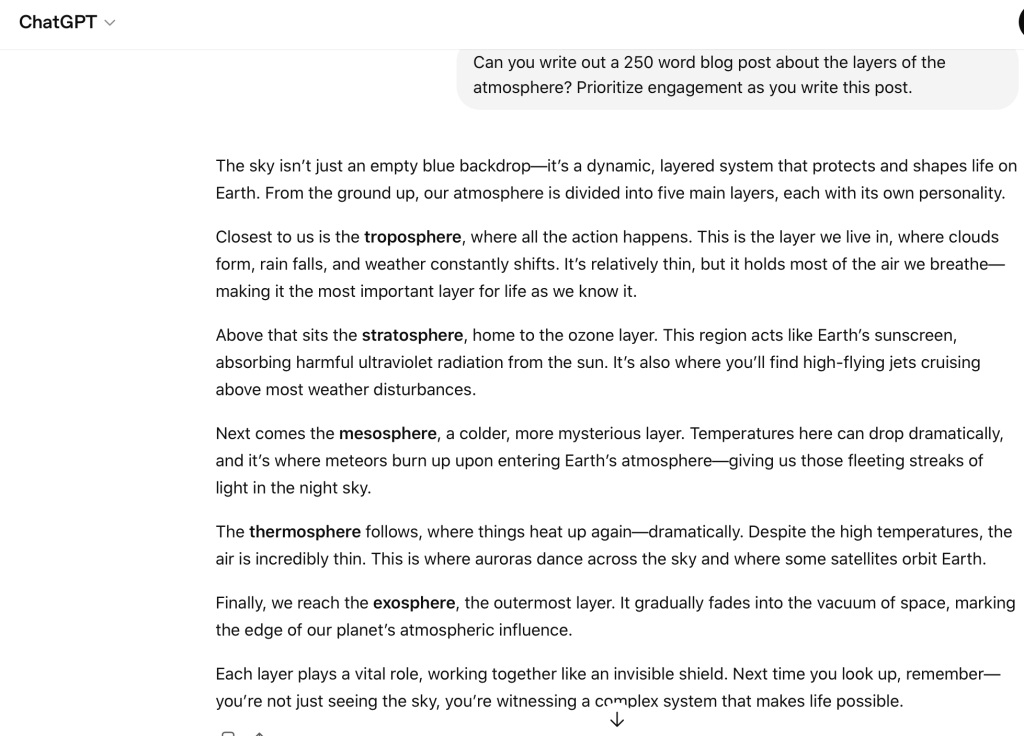

Experimenting with AI was an interesting experience. When I gave ChatGPT a prompt about the layers of the atmosphere, it produced a fairly decent blog post that met the word count and explained the basics of each layer. However, I noticed that although the tool could write a post about the layers of Earth’s atmosphere, the information was either inaccurate or surface-level. If I had asked for more detail in my prompt, ChatGPT might have supplied it, but I can’t be certain. I also noticed that nothing was backed up with evidence. It was as if the system was pulling from a reservoir without knowing whether what it gave me was trustworthy. No reputable sources were cited for the information, and almost every paragraph about the individual layers had a stilted, stagnant flow. In my opinion, the post did not read like something a human would have written. There were definite signs that ChatGPT is not a reliable source or creator on its own. I wouldn’t say that AI tools like this are trustworthy. I fully believe there has to be a human guide behind the scenes, double-checking all of the work and, in many cases, feeding the tool the right information to push the content in a specific direction. AI cannot create accurate scenarios or supply factual information without the assistance of humans and manual research. Going back and editing the entire post was refreshing because I actually had evidence to back up the claims in it.

https://docs.google.com/document/d/1kLCXixGU_Pd7fi5r8y3hMTR494MPe3nbAMse4p6772c/edit?usp=sharing
